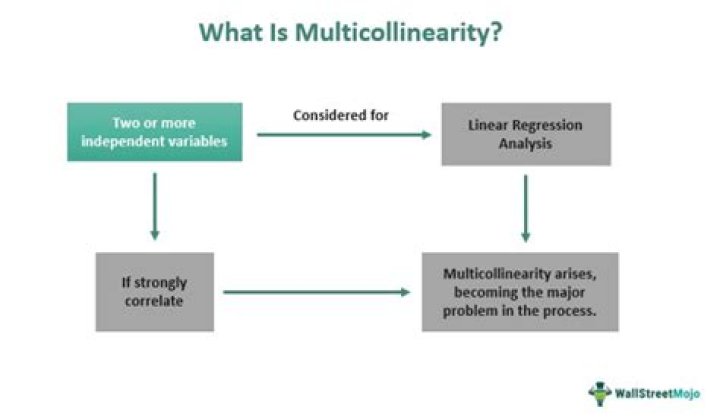

How do you interpret multicollinearity in SPSS?

You can check multicollinearity two ways: correlation coefficients and variance inflation factor (VIF) values. To check it using correlation coefficients, simply throw all your predictor variables into a correlation matrix and look for coefficients with magnitudes of 0.80 or higher.
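The correlation screen described above can also be sketched outside SPSS. The following is a minimal Python illustration, not an SPSS procedure: the function names, the variable names, and the data are all hypothetical, and the 0.80 cutoff is simply the one quoted in the answer.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def flag_high_correlations(predictors, threshold=0.80):
    """Return (name_a, name_b, r) for every predictor pair with |r| >= threshold."""
    flagged = []
    for (name_a, col_a), (name_b, col_b) in combinations(predictors.items(), 2):
        r = pearson(col_a, col_b)
        if abs(r) >= threshold:
            flagged.append((name_a, name_b, r))
    return flagged


# Hypothetical predictor data, for illustration only.
predictors = {
    "height_cm": [150, 160, 170, 180, 190],
    "weight_kg": [52, 61, 69, 80, 92],
    "age": [30, 25, 41, 22, 35],
}

for name_a, name_b, r in flag_high_correlations(predictors):
    print(f"{name_a} vs {name_b}: r = {r:.3f}")
```

Only pairwise relationships are visible this way; a predictor that is a near-combination of several others can slip through, which is why VIF is usually checked as well.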

How do you analyze multicollinearity in SPSS?

There are three diagnostics that we can run in SPSS to identify multicollinearity:

- Review the correlation matrix for predictor variables that correlate highly.

- Compute the Variance Inflation Factor (henceforth VIF) and the Tolerance statistic.

- Compute Eigenvalues.
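For the third diagnostic in the list above, here is a rough Python sketch of the idea, assuming NumPy is available. It takes eigenvalues of the predictor correlation matrix and derives condition indices from them; this is an assumption made for illustration and is not guaranteed to match the scaling SPSS uses in its collinearity diagnostics table. The function name and the data are hypothetical.

```python
import numpy as np


def condition_indices(X):
    """Eigenvalues (largest first) of the predictor correlation matrix,
    plus condition indices sqrt(largest eigenvalue / each eigenvalue)."""
    X = np.asarray(X, dtype=float)
    corr = np.corrcoef(X, rowvar=False)            # columns are predictors
    eigenvalues = np.linalg.eigvalsh(corr)[::-1]   # eigvalsh returns ascending order
    # Guard against division by zero under perfect collinearity.
    safe = np.maximum(eigenvalues, np.finfo(float).eps)
    return eigenvalues, np.sqrt(eigenvalues[0] / safe)


# Hypothetical data: columns are height_cm, weight_kg, age.
X = np.array([
    [150, 52, 30],
    [160, 61, 25],
    [170, 69, 41],
    [180, 80, 22],
    [190, 92, 35],
])

eigenvalues, indices = condition_indices(X)
print("eigenvalues:", eigenvalues)
print("condition indices:", indices)
```

An eigenvalue close to zero, and therefore a large condition index, suggests that some predictors are nearly linear combinations of others.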

How do you interpret multicollinearity results?

- VIF starts at 1 and has no upper limit.

- VIF = 1 means there is no correlation between the independent variable and the other variables.

- VIF exceeding 5 or 10 indicates high multicollinearity between this independent variable and the others.
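The bounds in the list above follow from how VIF is calculated: each predictor is regressed on all the other predictors, and VIF = 1 / (1 - R²) for that auxiliary regression, so R² = 0 gives 1 and R² near 1 gives an arbitrarily large value. A minimal sketch of that calculation, assuming NumPy is available; the function name `vif` and the data are hypothetical, and this is an illustration rather than SPSS's own routine.

```python
import numpy as np


def vif(X):
    """VIF for each column of X (rows are cases, columns are predictors)."""
    X = np.asarray(X, dtype=float)
    n_cases, n_predictors = X.shape
    values = []
    for j in range(n_predictors):
        target = X[:, j]
        others = np.delete(X, j, axis=1)
        # Auxiliary regression: predictor j on an intercept plus all other predictors.
        design = np.column_stack([np.ones(n_cases), others])
        coef, *_ = np.linalg.lstsq(design, target, rcond=None)
        residuals = target - design @ coef
        centered = target - target.mean()
        r_squared = 1.0 - (residuals @ residuals) / (centered @ centered)
        values.append(1.0 / (1.0 - r_squared))
    return np.array(values)


# Hypothetical data: columns are height_cm, weight_kg, age.
X = np.array([
    [150, 52, 30],
    [160, 61, 25],
    [170, 69, 41],
    [180, 80, 22],
    [190, 92, 35],
])

for name, value in zip(["height_cm", "weight_kg", "age"], vif(X)):
    print(f"{name}: VIF = {value:.2f}")
```

In this made-up data set, height and weight track each other closely, so both get a large VIF, while age stays close to 1.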

What is an acceptable level of multicollinearity?

According to Hair et al. (1999), the maximum acceptable level of VIF is 10. A VIF value over 10 is a clear signal of multicollinearity.

How do you fix multicollinearity in SPSS?

How to Deal with Multicollinearity

- Remove some of the highly correlated independent variables.

- Linearly combine the independent variables, such as adding them together.

- Perform an analysis designed for highly correlated variables, such as principal components analysis or partial least squares regression.
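The second option in the list above, linearly combining predictors, can be sketched as follows. One assumption here goes beyond the text: the variables are standardized to z-scores before being averaged, so that a variable measured on a larger scale does not dominate the sum. The function names and data are hypothetical.

```python
from statistics import mean, stdev


def zscores(values):
    """Standardize a sequence to mean 0 and sample standard deviation 1."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]


def composite(*columns):
    """Average the z-scores of several correlated predictors into one index."""
    standardized = [zscores(col) for col in columns]
    return [mean(row) for row in zip(*standardized)]


# Hypothetical, highly correlated predictors.
height_cm = [150, 160, 170, 180, 190]
weight_kg = [52, 61, 69, 80, 92]

body_size = composite(height_cm, weight_kg)
print(body_size)
```

The single `body_size` column would then replace both original predictors in the regression, at the cost of no longer being able to separate their individual effects.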

How do you know if multicollinearity is a problem?

In factor analysis, principal component analysis is used to derive a common score from multicollinear variables. A rule of thumb for detecting multicollinearity is that a VIF greater than 10 signals a problem.

What VIF value indicates multicollinearity?

Generally, a VIF above 4 or tolerance below 0.25 indicates that multicollinearity might exist, and further investigation is required. When VIF is higher than 10 or tolerance is lower than 0.1, there is significant multicollinearity that needs to be corrected.

How do you interpret VIF results?

In general, a VIF above 10 indicates high correlation and is cause for concern. Some authors suggest a more conservative level of 2.5 or above.

...

A rule of thumb for interpreting the variance inflation factor:

- 1 = not correlated.

- Between 1 and 5 = moderately correlated.

- Greater than 5 = highly correlated.
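The rule of thumb above translates directly into a small helper. This is only an illustrative sketch with hypothetical function names; the cutoffs are the ones listed, and the tolerance statistic is included because it is the reciprocal of VIF.

```python
from math import isclose


def tolerance(vif):
    """Tolerance is the reciprocal of VIF (equivalently 1 - R-squared)."""
    return 1.0 / vif


def describe_vif(vif):
    """Label a VIF value using the 1 / 1-to-5 / above-5 rule of thumb."""
    if isclose(vif, 1.0):
        return "not correlated"
    if vif < 1.0:
        raise ValueError("VIF cannot be below 1")
    if vif <= 5.0:
        return "moderately correlated"
    return "highly correlated"


for value in (1.0, 3.2, 12.0):
    print(value, describe_vif(value), f"tolerance = {tolerance(value):.3f}")
```

Because the two statistics are reciprocals, a VIF cutoff of 4 corresponds to a tolerance of 0.25, and a VIF cutoff of 10 to a tolerance of 0.1.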

What is considered high multicollinearity?

Multicollinearity is a situation where two or more predictors are highly linearly related. In general, an absolute correlation coefficient greater than 0.7 between two or more predictors indicates the presence of multicollinearity.

What is considered a high VIF?

The higher the value, the greater the correlation of the variable with other variables. Values of more than 4 or 5 are sometimes regarded as being moderate to high, with values of 10 or more being regarded as very high.

What do VIF values mean?

Variance inflation factor (VIF) is a measure of the amount of multicollinearity in a set of multiple regression variables. Mathematically, the VIF for a variable equals the ratio of the variance of its coefficient estimate in the full model to the variance of that estimate in a model that includes only that single independent variable.

How does multicollinearity affect the regression results?

Statistical consequences of multicollinearity include difficulties in testing individual regression coefficients due to inflated standard errors. Thus, you may be unable to declare an X variable significant even though (by itself) it has a strong relationship with Y.

How do you test for multicollinearity in SPSS logistic regression?

One way to measure multicollinearity is the variance inflation factor (VIF), which assesses how much the variance of an estimated regression coefficient increases if your predictors are correlated. A VIF between 5 and 10 indicates high correlation that may be problematic.

What does VIF of 1 mean?

A VIF of 1 means that there is no correlation between the jth predictor and the remaining predictor variables, and hence the variance of its coefficient estimate, b_j, is not inflated at all.

What is R-squared in VIF?

To calculate VIF, each independent variable (IV) is regressed on all the other IVs. Each of these models produces an R-squared value indicating the percentage of the variance in the individual IV that the set of other IVs explains. Consequently, higher R-squared values indicate higher degrees of multicollinearity. VIF calculations use these R-squared values.

What is VIF in SPSS?

One way to detect multicollinearity is by using a metric known as the variance inflation factor (VIF), which measures the strength of correlation among the predictor variables in a regression model.

What is the best way to identify multicollinearity?

A simple method to detect multicollinearity in a model is to compute the variance inflation factor (VIF) for each predictor variable.

How do you deal with multicollinearity in regression?

How Can I Deal With Multicollinearity?

- Remove highly correlated predictors from the model. ...

- Use Partial Least Squares Regression (PLS) or Principal Components Analysis, regression methods that cut the number of predictors to a smaller set of uncorrelated components.
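To show what "uncorrelated components" means in the last option, here is a minimal principal components sketch, assuming NumPy is available. It covers only the PCA step, not partial least squares and not the regression on the retained components that would follow; the function name and the data are hypothetical.

```python
import numpy as np


def principal_components(X):
    """Component scores and explained-variance shares for standardized predictors."""
    X = np.asarray(X, dtype=float)
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)   # z-score each column
    U, s, Vt = np.linalg.svd(Z, full_matrices=False)
    scores = U * s                                     # columns are uncorrelated
    explained = s ** 2 / np.sum(s ** 2)                # share of variance per component
    return scores, explained


# Hypothetical data: columns are height_cm, weight_kg, age.
X = np.array([
    [150, 52, 30],
    [160, 61, 25],
    [170, 69, 41],
    [180, 80, 22],
    [190, 92, 35],
])

scores, explained = principal_components(X)
print("explained variance shares:", np.round(explained, 3))
```

Keeping only the leading columns of `scores` as predictors removes the collinearity, although the resulting coefficients describe components rather than the original variables.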